80/20

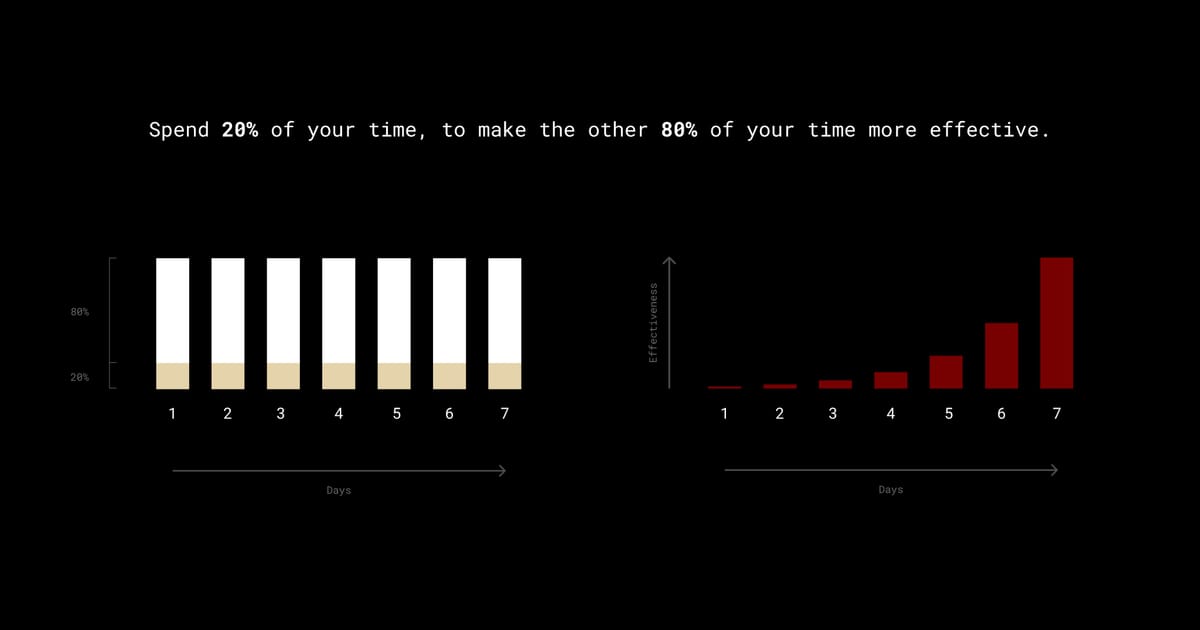

Spend 20% of your time to make the other 80% of your time more effective.

No, this will not be a piece about turning you into the ultimate human robot, squeezing every second out of a task so that you can cram ever more tasks into your schedule until you collapse.

It's also not about saving or making you a lot of money (it could be a byproduct eventually, though).

Space to Breathe

It's essentially about freeing time, trying to find space to breathe, think, play, learn, go on a hike, build your mind and body, build relationships, and spend more time with our loved ones.

80/20 in this context also isn't just about the Pareto principle. The 80/20 principle I want to talk about is related to Pareto, but applied differently.

The Pareto principle states that for many outcomes, roughly 80% of consequences come from 20% of the causes (the “vital few”).[1] Other names for this principle are the 80/20 rule, the law of the vital few, or the principle of factor sparsity

- Wikipedia

In computing, some of the examples listed are:

For example, Microsoft noted that by fixing the top 20% of the most-reported bugs, 80% of the related errors and crashes in a given system would be eliminated.[16] Lowell Arthur expressed that "20 percent of the code has 80 percent of the errors. Find them, fix them!" It was also discovered that in general the 80% of a certain piece of software can be written in 20% of the total allocated time

- Wikipedia

If the 80/20 rule has such an impact, we should apply the rule more deliberately.

I strongly value my time. It's scarce. It's finite. (Others are working on fixing this.) I therefore strongly dislike "wasting" time.

(Please note: I'm not trying to tell you what "wasteful time" is and isn't. Everyone should make up their own mind on what they consider wasteful time. For instance, I like to ride my motorcycle, while others think it's a waste of time.)

Essentially, I'm speaking about tasks that I don't enjoy working on over and over again. I don't care if a task needs to be done once and then never again. I'll do it, forget it and happily live on. But if it's a recurring task, especially one of those seemingly mundane ones, it probably has potential for some optimization.

(If it is a "painful" task, i.e. costly, time-consuming, complex, or error-prone, and lots of people have the same "pain", then the solution that handles the task could even be turned into a business. Arvid Kahl has written extensively about this.)

80/20 - but more deliberately

Spend 20% of your time to make the other 80% of your time more effective.

In other words, allocate 20% of your time and look for ways to improve the process for an existing task or solution. If we develop a routine to do this, two main patterns will emerge:

- Continuous improvements through iterations: We get used to working in iterations!

Since we only want to use 20% of our time on improvements, we can't make things perfect the first time or in one go. That's ok! Stick to the 20% and make the process just a little bit better today. Then a little bit better tomorrow. Again and again. Iteration after iteration. Until there isn't any room left for further improvement (or the time required to improve the process further is no longer worth it).

- Compounding effect: All those iterations add up. At some point the compounding effect kicks in and things start to improve almost exponentially.

Take the 20% as a guideline. 20% of an 8-hour workday is roughly an hour and a half (96 minutes, to be exact). It doesn't need to be spent in one block. We also don't need to strictly use 20% all the time, every day. Sometimes it's enough to use just 5% or 10%. And sometimes we don't have any time at all and simply need to get things done, which leaves us little chance to improve the process itself.
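To make the numbers concrete, here is a small sketch in Python. The function names, the 30-minute chore and the 5% saving per iteration are assumptions chosen purely for illustration; they are not measurements of anything.

```python
def budget_minutes(workday_hours: float, percent: float) -> float:
    """Minutes per workday set aside for improving how we work."""
    return workday_hours * 60 * percent / 100


def task_minutes_after(start_minutes: float, saving_percent: float, iterations: int) -> float:
    """Duration of a recurring task after a number of small improvements.

    Assumption: every iteration shaves `saving_percent` off whatever the
    task took after the previous iteration, so the savings compound.
    """
    return start_minutes * (1 - saving_percent / 100) ** iterations


if __name__ == "__main__":
    for percent in (5, 10, 20):
        print(f"{percent}% of an 8-hour day: {budget_minutes(8, percent):.0f} minutes")

    # Hypothetical 30-minute chore, improved by 5% per iteration.
    for n in (0, 5, 10, 20):
        print(f"after {n} iterations: {task_minutes_after(30, 5, n):.1f} minutes")
```

With these made-up numbers, the chore drops from 30 to roughly 18 minutes after ten iterations, even though no single iteration changed much.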

Sounds good, some ideas?

Yes! I work in software and tech, so the ideas and examples will be heavily influenced by this field. But I'm sure they can be applied to many more areas, especially if your work involves a computer on a regular basis.

Refactoring

Get used to refactoring things.

When developing software, we write code. We solve a problem. We are happy with the result. However, as we write code, we introduce complexity. This complexity needs to be managed. Refactoring helps to reduce complexity. We may not be happy with how the code is structured. We recognize patterns in the code which we've already seen somewhere else in the code base. Or we recognize our new code is hard to read and understand, hard to use, hard to extend,... We refactor the code. When we're done with refactoring, we want the code to produce the same results as before (a good test suite is helpful here).
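A minimal sketch of what "same results, different implementation" can look like, in Python. The invoice and quote functions, the 20% tax rate and the sample prices are all hypothetical; the point is only that a small check pins the behaviour down before and after the refactoring.

```python
# Before: the same "add tax" logic is copied into two functions.
def invoice_total_before(prices_in_cents):
    total = 0
    for price in prices_in_cents:
        total += price + price * 20 // 100
    return total


def quote_total_before(prices_in_cents):
    total = 0
    for price in prices_in_cents:
        total += price + price * 20 // 100
    return total


# After: the shared pattern lives in one small, reusable helper.
TAX_PERCENT = 20  # hypothetical rate, for illustration only


def with_tax(price_in_cents):
    """Price plus tax, using the same integer arithmetic as the old code."""
    return price_in_cents + price_in_cents * TAX_PERCENT // 100


def total_with_tax(prices_in_cents):
    """Replacement for both the invoice and the quote total."""
    return sum(with_tax(price) for price in prices_in_cents)


# A tiny stand-in for a test suite: the refactored code must return
# exactly what the old code returned.
for sample in ([], [100], [199, 250, 999]):
    assert total_with_tax(sample) == invoice_total_before(sample)
    assert total_with_tax(sample) == quote_total_before(sample)
```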

The outcome doesn't change. Only the underlying implementation changes. Don't let anyone tell you refactoring is a waste of time, because "no new business value" was introduced. It's important and should be done often.

The newly refactored code might not add any new obvious features, yes, but it will improve the code base as a whole. Maybe we got rid of duplicated code and logic. Maybe we could abstract a pattern we see over and over again which then provides a generic solution for further applications (thus, making upcoming feature requirements easier and faster to fulfil). New, simple and reusable components emerge. Maybe we got rid of unnecessary complexity. Maybe we improved stability and performance in the process. Maybe we got rid of bugs. Maybe even an idea for a whole new service emerges, opening up new business opportunities? Maybe the refactoring just got rid of some typos. That's ok! The code base is still better off now than before!

By consistently applying 20% of our time to refactoring existing code (where necessary), we continuously improve the code base and make the remaining 80% of our time more efficient.

Refactoring things helps, a lot!

Reduce barriers

Sometimes a task is hard to perform just because there are too many barriers we need to pass before we actually get to the task itself.

If we can find a way to break down those barriers, we eventually get to a point where the task becomes easier to start. It doesn't make the task itself faster. But the overall time it takes is then shorter (since we reduced or got rid of the overhead).

In some form or another, reducing barriers is related to refactoring. In my opinion, refactoring can also be applied outside of software engineering (although we probably tend to call it something else, e.g. "rework"). Essentially, refactoring means making parts of an existing process or solution easier to use, easier to reuse with other things, or better prepared to fulfil upcoming requirements. Reducing barriers is just one way to refactor things.

So, for example, if you want to work on your body and fitness level (and, like me, you aren't a sports junkie), reduce all the barriers stopping you from starting a workout. Make it easy to start. Make it hard to say "no, not now".

Robots and Digitalization

Robots are already here to help us, and they'll stay. We often think of them as big clunky machines at production lines. Or as the robots we were promised a long time ago, helping us in our households, cooking and cleaning for us, bringing us cooled drinks. Servants in mechanical form, basically. I'm not talking about those kinds of robots. The robots I'm talking about are much smaller, and they have existed for a long time already.

We're getting back to code. It's little programs and services. Tools and services which can be connected to perform periodic tasks in an automatic fashion.

Digitalization has only just begun. And to make one thing clear: digitalization is not pushed by ever more intelligent computers. While there are certainly some really astonishing and important AI projects out there, outperforming us humans on many levels and leaving us puzzled, AI is not the main driver for digitalization or Industry 4.0. The main driver for digitalization is to prepare work and processes in such a way that a computer can do them too. It's essentially "dumbing things down" so that a computer can perform the tasks.

Tools and services like IFTTT, Zapier, Webflow, and Airtable (to name just a few among many "No-Code" services) are some of your helpful robots, allowing you to build pipelines that perform various tasks. Open Source alternatives exist for almost any service. You could run your robots on your own servers (e.g. at Digital Ocean, or on a Raspberry Pi).

Within a task, an event occurs. The event triggers a pipeline you've set up. The pipeline can transform any incoming data. Each part of the pipeline prepares or creates data for the next part. Preparing data can mean simply transforming it from one format into another (e.g. a CSV file to JSON, which the next part then enriches with additional data), storing data, or triggering other pipelines and services (e.g. calling webhooks on other services). Rules can be created to specify what the pipeline should or should not do (e.g. only do something if it is the last Monday of a month).
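The flow described above can be sketched in a few lines of Python, using only the standard library. Everything here is an assumption made for illustration: the function names, the CSV columns and the `processed_on` field are invented, and a real setup would more likely rely on one of the services mentioned earlier or on a scheduler.

```python
import csv
import io
import json
from datetime import date, timedelta
from typing import List, Optional


def is_last_monday_of_month(day: date) -> bool:
    """Rule: true only if `day` is a Monday and the next Monday is in another month."""
    return day.weekday() == 0 and (day + timedelta(days=7)).month != day.month


def csv_to_records(csv_text: str) -> List[dict]:
    """Step 1: transform CSV text into a list of dictionaries."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def enrich(records: List[dict], day: date) -> List[dict]:
    """Step 2: add extra data to every record (here, just the run date)."""
    return [{**record, "processed_on": day.isoformat()} for record in records]


def run_pipeline(csv_text: str, day: date) -> Optional[str]:
    """Event handler: apply the rule, then pass the data through each step."""
    if not is_last_monday_of_month(day):
        return None  # rule not met, do nothing
    return json.dumps(enrich(csv_to_records(csv_text), day), indent=2)


if __name__ == "__main__":
    incoming = "name,amount\nrent,900\npower,60\n"
    print(run_pipeline(incoming, date(2024, 1, 29)))  # a last Monday of the month
    print(run_pipeline(incoming, date(2024, 1, 22)))  # a Monday, but not the last one
```

The last step could just as well store the JSON or post it to a webhook; that part is left out to keep the sketch free of network calls.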

So, if you find yourself in a position where you have to do a task once every day, month, or even year, think about automating it. You know what steps you'll do over and over again (this already represents your pipeline). Why and when do you decide to start doing the task? That's your event and a rule. Does it involve different steps from start to end? Those are the different parts of the pipeline. Do you already transform data from one format to another? Is it always the same, so that it can be easily automated? Or do you need to refactor some parts of the workflow before it can be automated?

Breathe

Surely, not everything can or should be fully optimized or fully automated. Some things we do just for the joy of doing them. If you love reading books, there's probably no point in optimizing reading books. But what we can think about is how to optimize other tasks so that we end up with more time for reading books.

Your Turn

Learning new things is more fun together!

What are some things you could spend 20% of your time on?

Feel free to share and discuss this, or send me a DM on Twitter!

If you'd like to read more pieces like this in the future, feel free to sign up!